In this article, I'd like to tell you a story about how we analyzed our project with the PVS-Studio static code analyzer trying to find out how much we could benefit from this tool. I won't be discussing unique and interesting bugs here. In fact, all the bugs and defects found by the analyzer in our code proved to be quite trivial. What I'd like to talk about instead is my personal opinion of this tool as a project manager. Perhaps this point of view is not that accurate and objective as that of a software engineer because it is affected by the specifics of work management in a particular project, but I still believe that the ideas I share in this article will help those who consider trying static analysis in their work; or those who regularly face big resource expenses on fixing bugs found at the testing stage.

This article was originally published at website habrahabr.ru. This article and its translation republished by the editors' permission.

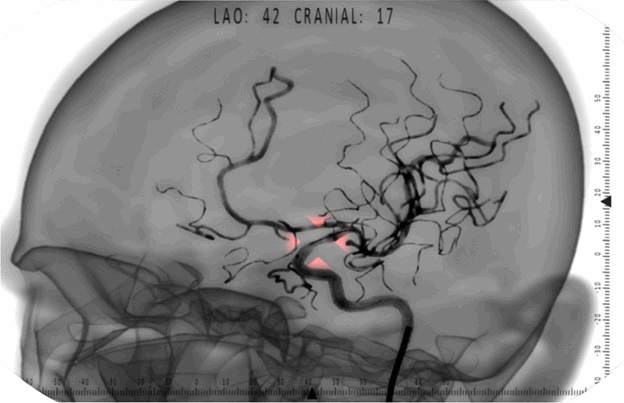

I work at the Eidos-Medicine ltd. company specializing in development of virtual medical training simulators. These are special software-hardware complexes capable of simulating the performance of various surgical interventions as part of an educational process for medical specialists. Using simulators allow medical students and interns to acquire their first practical profession skills before operating on live patients. Our project team develops an X-ray endovascular surgery simulator. This sphere embraces quite a number of various operations on blood vessels carried out under the control of fluoroscopy: angioplasty, stenting, spiral aneurysm embolization, and aortic aneurysm endoprosthesis replacement.

Our current team has been working on this project for one year and a half. The work is running its normal course. Consulting surgeons work with our analyst to coordinate on the surgical intervention tactics step by step and work out the requirements to the visualization system. The 3D artist uses CT-angiography, anatomical atlases, and surgeons' advice to develop new models for the database of medical cases emulated by the simulator. High-level programmers' duty is to implement fluoroscopy visualization, the physics of endovascular instruments movement inside arteries, and the logical analysis of a student's actions on the simulator to monitor the accuracy of accomplishing various intervention stages. Circuit engineers, microcontroller programmers, and design engineers ensure the correct work of various medical equipment emulators used in simulation; reading of data from the sensors and their primary processing and passing into the program. In response, the high-level part of the system prepares the information to be passed into the microcontroller, this information being used for implementing the hardware indication of the virtual intervention workflow and tactile feedback effects meant to make the training process as realistic as possible.

Once the work is done, compiled, soldered, strapped, milled, and assembled, the results are passed to the tester. We basically use manual testing and have very few automatic tests. Over the entire process of new version development, the tester checks on his computer the existing program revisions for the performance, stability, and operation correctness parameters. It enables us to intercept any dangerous commits in time, for we have pretty lengthy iterations per version. However, the major testing of the release candidate is performed on the simulator itself. This stage often involves certain specific issues. For example, there may be faults due to misunderstanding regarding the controller-communication protocol to be used; or the dynamics of simulated instruments movement on the simulator may be slightly different from the debugging keyboard control, and this "slightly" in fact results in critical issues with the physics engine; or some third-party libraries used by the new version are missing from the distribution. There are plenty of unpleasant surprises that may emerge in the process, but the top leaders are, of course, heisenbugs, resulting in program crashes or critical issues preventing a student from accomplishing the task on the simulator in normal way.

However, simple and easy-to-detect bugs also take quite a while to find and fix. When adding new features into the program, new bugs often slip in the code, too. Most of them are caught while working on the version, in the course of daily regression testing. On discovering a new bug, the tester must find out which developer is responsible for it (which is, by the way, not always easy) and create a bug-fixing task for this programmer in Redmine. Once the programmer has solved the issue and committed the fix, some additional checks are required to make sure that the task is really solved and can be closed. All of this summed up, it makes at least half a person-hour to solve a most trivial case, i.e. when the bug can be quickly and easily reproduced and the programmer can quickly figure out the reasons behind it and ways to fix the code. And if a bug takes 20-30 minutes to reproduce, it will result in a two person-hour loss even for a quickest and most trivial fix. That's quite a lot indeed. And the worst thing about it is that most of these bugs are caused by mere inattentiveness.

It was not my idea to try a static code analyzer on our project. It was suggested by a colleague of mine after he had visited the "C++ Russia" conference where he had met the guys from PVS-Studio. I took a pause to think it over and finish with the current release, and finally decided to give it a try. I contacted PVS-Studio's developers via e-mail and after exchanging a few emails, they granted me a registration key for two weeks, after which we set about analyzing our project.

Now I should say a few words about the peculiarities of the project architecture. We do not have much C++ code as such. It embraces about fifty libraries in total but some of them contain literally a few dozens of code lines. A significant part of the program logic is concentrated in the graphics engine environment. C++ code is integrated into the project through DLL's. This is the way we implement some specific features absent in the graphics engine environment. Besides, we take out into DLL's any complex or resource-intensive dynamic framing or polygon meshing algorithms for rendering endovascular catheters and conductors, heartbeat simulation, and breathing movements. We also use C++ to implement the logic of surgical intervention simulation exercises to monitor the operation workflow through the intervention steps and correctness of the student's actions. In total, our project includes a few small libraries in C++ plus several middle-sized (2-3 thousand code lines) ones. There are no interesting static analysis tools available to test the part of the program logic concentrated in the graphics engine environment, so we managed to only partially analyze our project with PVS-Studio.

PVS-Studio was very easy and fast to install on my computer, after which it integrated into Visual Studio 2013. Andrey Karpov from the PVS-Studio team sent me via e-mail the links to the user's manual and something like Quiq Start Guide, which wasn't really necessary because the analyzer's interface and features can be learned through mere intuition and the guess-and-try method.

15 minutes later, I was already analyzing the code of a DLL responsible for modeling the process of radiocontrast agent spreading through arteries. This library contains about 4 thousand code lines. I was a bit surprised to know that the analyzer hadn't found any single first-level error in the solution. Well, on the other hand, it had been already tested for many dozens of hours and had been stable lately. So what does the analyzer draw our attention to in this code?

V550 An odd precise comparison: t != 0. It's probably better to use a comparison with defined precision: fabs(A - B) > Epsilon. objectextractpart.cpp 3401

D3DXVECTOR3 N = VectorMultiplication(

VectorMultiplication(V-VP, VN), VN);

float t = Qsqrt(Scalar(N, N));

if (t!=0)

{

N/=t;

V = V - N * DistPointToSurface(V, VP, N);

}Errors like this are found pretty often in this library. It's not a surprise actually, for I had already come across incorrect floating-point number handling in this project. But we had had no spare resources to search through the code for this type of bugs on a regular basis. After studying the analysis results, I realized we needed to recommend some reading on the subject to our programmer responsible for this code, so that he could get better at handling floating-point numbers. I already sent him the links to a couple of nice articles. We'll wait and see what comes of this. I can't say for sure if this bug really causes any real troubles in the program. The current solution sets a number of requirements to the original mesh of the arteries through which radiocontrast agent spreading is simulated. If these requirements are not followed, the program may crash or work improperly. Some of these requirements were worked out through analysis and others through experience. I won't be surprised if we find out that the latter portion of requirements is constantly growing because of that very incorrect floating-point number handling. I should also add that not all the cases of raw comparison of floating-point numbers were real errors.

V807 Decreased performance. Consider creating a reference to avoid using the 'Duct.TR[cIT]' expression repeatedly. objectextractpart.cpp 2689

for (k = 0; k < Duct.LIsize; k++)

{

cIT = Duct.ListIT[k];

if(DuctMain.TR[cIT].inScreen &&(Duct.TR[cIT].PNum > OneDev512))

{

tuv[0].y = Duct.TR[cIT].v0 * Duct.TR[cIT].PNum;

....

}

....

}There were about 20 messages of this kind in the solution. Interestingly, this library has very high performance requirements. In earlier times, we used to count every multiplication operation and seek every opportunity to save resources in functions processing vectors and matrices. The loop in the code above runs through a great number of iterations - up to several dozens of thousands. It is included into the algorithms of the particles system which provides the angiography rendering. There are certain intricacies about visualizing the radiocontrast agent in the fluoroscopy image which have to do with the fact that blood vessels oriented at right angle to the frame plane look darker. X-rays in this case follow along the vessel, i.e. through a thick layer of absorbing medium, and therefore grow weaker and affect the film lesser in this projection. This effect is implemented in our program through a system of semi-transparent particles distributed inside the artery polygon mesh. Polygon meshes in our program are of a very high resolution; consequently, the amount of particles is also huge. It would be interesting to carry out an experiment to find out if we can win a millisecond or two by fixing these untidy code fragments. The compiler probably does this optimization automatically, but why not try forcing it?

V669 Message: The 'cIT', 'j' arguments are non-constant references. The analyzer is unable to determine the position at which this argument is being modified. It is possible that the function contains an error. objectextractpart.cpp 2406

D3DXVECTOR3

ObjectExtractPart::GetD(D3Object& Duct, int& cIT, int& j){

return DuctMain.VP[DuctMain.TR[cIT].IP[2]].P

+ (

DuctMain.VP[DuctMain.TR[cIT].IP[0]].P

- DuctMain.VP[DuctMain.TR[cIT].IP[2]].P + (

DuctMain.VP[DuctMain.TR[cIT].IP[1]].P

- DuctMain.VP[DuctMain.TR[cIT].IP[0]].P

) * Duct.TR[cIT].tt[j].x

) * Duct.TR[cIT].tt[j].y

+ DuctMain.TR[cIT].CNR * Duct.TR[cIT].tt[j].z;

}This code sample is correct. The programmer only made a mistake in the function parameter declaration: the parameters should have been const int&.

Having detected surprisingly few critical errors in the first solution picked for analysis, we passed on to another one which is more actively developing at present. This subject consists of eight libraries used to pass virtual intervention workflow data from the graphics engine into the code of the logic of the surgical intervention simulation exercises. The same libraries also enable data transfer in the opposite direction - for example to inform about the student's mistakes or to signal intervention stage accomplishment. What follows from it is the fact that the logic of the exercises themselves can be written solely in C++, without dealing with the graphics engine environment.

This time, we have picked a richer harvest of bugs, and there even were a couple of really dangerous issues among them:

V595 Message: The '_idiChannel' pointer was utilized before it was verified against nullptr. Check lines: 917, 918. logicinterface.cpp 917

int instType =

_idiChannel->GetActiveInstrumentTypeInGroup(instrumentId);

if (_alogChannel != NULL && _idiChannel != NULL) {

....

}This is the place where the program can potentially crash. Earlier testing failed to reveal this error because the _idiChannel pointer had always appeared non-NULL up to now in the current program version. But it cannot be guaranteed that it will stay the same in the course of further development, so this bug might well show up one day.

V688 The 'chCameraMatrix' local variable possesses the same name as one of the class members, which can result in a confusion. angiographlog.cpp 323

class ANGIOGRAPHLOG_API AngiographLog: public ILogic

{

....

Aco_Matrix* chCameraMatrix;

Aco_Matrix* chProjectionMatrix;

....

}

D3DXMATRIX AngiographLog::GetCameraMatrix() {

D3DXMATRIX res;

Aco_Matrix* chCameraMatrix=(Aco_Matrix*)GetChild(CameraMatrix);

if ( chCameraMatrix != NULL) {

res = chCameraMatrix->GetMatrix();

}

return res;

}The analyzer found four warnings of this kind in different files of this solution. In this case, it didn't result in any issues. But it could have mislead a maintaining programmer someday and made them use uninitialized pointers.

V522 Dereferencing of the null pointer 'chInstrumentSubLineLengthIn' might take place. instrumentdatainterface.cpp 239

D3DXVECTOR3 InstrumentDataInterface::GetSubLineEndPos(....)

{

....

if(chInstrumentSubLineLengthIn != NULL)

chInstrumentSubLineLengthIn->SetFloat(subLineLengthIn);

else

chInstrumentSubLineLengthIn->SetFloat(0.0F);

....

}As for this code, I suppose that the programmer first wrote the first two code lines. Then he was distracted - perhaps by something important. Anyway, on getting back to the task, he wrote some obvious nonsense. Such things do happen. But it resulted in the code having a dangerous spot where the program may crash.

Dangerous fragments related to pointers were found in other libraries as well:

V614 Potentially uninitialized pointer 'tabAntiPowerSpheres' used. getnewposbyheartbeat.cpp 175

void GetNewPosByHeartBeat::_precalc()

{

....

STL_Table *stlAntiPowerSpheres;

CSTL_Table *tabAntiPowerSpheres;

stlAntiPowerSpheres = (STL_Table *)GetChild(....);

if (stlAntiPowerSpheres != NULL)

tabAntiPowerSpheres = stlAntiPowerSpheres->getSTL_Table();

if (tabAntiPowerSpheres != NULL)

{

int tableSize = tabAntiPowerSpheres->getRowCount();

....

}

....

}This time, the bug is a bit less obvious. If stlAntiPowerSpheres appears to be NULL, then tabAntiPowerSpheres remains uninitialized and points to a random memory area. The NULL check will be passed successfully followed by a program crash when trying to access the object fields. This issue has failed to be revealed by testing - probably due to the same reasons as the (STL_Table *)GetChild(CH_ANTIPOWER_SPHERES) call would evaluate to non-NULL earlier throughout the code.

At last I decided to run the analyzer on one solution which hadn't been tested yet and is still being developed and not yet integrated into the main project. Within this solution, we are working on our own physics engine of a flexible cord. There were more bugs this time. Here is, for example, one funny sample:

V527 It is odd that the false value is assigned to 'bool' type pointer. Probably meant: *outIsInScene = false. rpscene.cpp 79

bool rpScene::CheckIsRopeInScene(...., bool* outIsInScene)

{

if (mEngine == NULL)

{

outIsInScene = false;

return false;

}

else

{

*outIsInScene = mEngine->CheckIsRopeInScene(ropeToCheck);

return true;

}

}As for this case, I should note that the analyzer is only partially right. The outIsInScene parameter should not be represented by a pointer at all. But still thanks to PVS-Studio for pointing out this suspicious code fragment which has proved to be a real bug.

I won't cite all the warnings here. Just two more ones worth mentioning, to wind up the story.

V501 There are identical sub-expressions '(fabs(crossVect.x) > 1.192092896e-07F)' to the left and to the right of the '||' operator. rpmath.h 103

inline bool IsCollinearVectors(Vector3d vect1, Vector3d vect2)

{

Vector3d crossVect = Vector3dMultiply(vect1, vect2);

//checking vector for approaching zero;

return !((fabs(crossVect.x) > FLT_EPSILON) ||

(fabs(crossVect.y) > FLT_EPSILON) ||

(fabs(crossVect.x) > FLT_EPSILON));

}On the one hand, we are dealing with an ordinary error caused by the programmer's inattentiveness. On the other hand, though, a bug of this kind would be very difficult to catch if we were checking the program execution result in general instead of testing the performance of individual methods. This function checks two vectors for collinearity. For example, if the vector of a potential displacement of a flexible-string point, this displacement crossing the collision object, appears to be, with certain tolerance, collinear to the normal of the collision object's surface in the intersection point, this will affect the bounce calculation algorithm. But since there are a lot of interrelated factors affecting the physical model, it's not always possible to say, while watching the running program, what exactly has caused a particular type of inadequate behavior. This bug could have stayed unnoticed for a long time but for PVS-Studio.

There was also one more interesting warning by the analyzer. I didn't even get it at first because the analyzer was anxious about something in a string literal, not the code itself:

V691 Empirical analysis. It is possible that a typo is present inside the string literal: "out_Radius". The 'RADIUS' word is suspicious. rpropeinstancecommand.cpp 93

....

mCommandsDescriptions[currCommandNr].name =

"Get Rope Fragments Count(Rope;out_Count)";

....

mCommandsDescriptions[currCommandNr].

params[PARAM_NR_FRAGMENTS_COUNT].name = "out_Radius";

....But then we figured that the analyzer had been right and there should have been a different string literal indeed. The "out_Radius" line in this code resulted from the copy-paste of some earlier fragment. After that, the programmer made all the necessary edits except that he forgot to replace the string literal with the more appropriate "out_Count".

This is the code fragment that had been cloned:

....

mCommandsDescriptions[currCommandNr].name =

"Get Rope Fragment Radius(Rope; in_FragmentNr;out_Radius)";

....

mCommandsDescriptions[currCommandNr].

params[PARAM_NR_FRAGMENT_RADIUS].name = "out_Radius";

....A single-time check like that is of little use of course. The existing code has already made its way through pretty long testing, so there have been very few bugs; and among those few, many don't affect the code in the normal work mode. Are we going to purchase PVS-Studio licenses now? Personally I look positively on integrating a tool like that into our project. Obviously, using static analysis would gain us some spare resources both of the tester and developers. There would be fewer tasks marked as "Error" in Redmine, and solved tasks would be rejected by testers much rarer. Nevertheless, before making the final decision, we need to estimate the exact profit we'll gain from using PVS-Studio and compare it to the price of the product itself. What greatly affects the estimate is the fact that we have relatively little dynamically developed C++ code in our project. So for now we are going on without the analyzer.

I also shared the temporary PVS-Studio registration key with the developers from other project teams of the Eidos-Medicine ltd. company. I wanted them to try it and decide if they needed a tool like that in their work. Here are a few responses of theirs:

Andrey Karpov commented on the last response and asked me to cite his comment in this article:

"This is an inefficient way of using the tool, against which we warn our readers in almost every article. To put it brief, the earlier a bug is found, the better. There is no use hunting a typo in a debugger when you could have found it by static analysis immediately after compilation.

If the reason for not using the analyzer regularly is its low performance, please check the tips on how to speed it up. It may help. If not, there is always a way out through arranging automatic nightly checks (we can advise on how to do it better).

If the reason is a too large number of warnings, you can try hiding all the warnings for old code and work with new ones only (how to integrate static analysis into a large-scale project)."

0