I don't like it when people use artificial code examples to evaluate the diagnostic capabilities of static code analyzers. There is one particular example I'm going to discuss to explain my negative attitude to synthetic tests.

Bill Torpey recently wrote a blog post entitled "Even Mo' Static", where he shared his view on the results of testing Cppcheck and PVS-Studio analyzers on the itc-benchmarks project, which is a set of static analysis benchmarks by Toyota ITC.

That post upset me because it would leave you with an impression that Cppcheck's and PVS-Studio's capabilities were very similar. What follows from the article is that one analyzer is better at diagnosing some types of errors and the other, at diagnosing other types of errors, but their capabilities are generally the same.

I think it's a wrong conclusion. My opinion is that our analyzer, PVS-Studio, is several times more powerful than Cppcheck. Well, it's not even an "opinion" - it's what I know for sure!

However, since it's not obvious to an outside observer that PVS-Studio is ten times better than Cppcheck, there must be a reason for that. I decided to take a look at that project, itc-benchmarks, and figure out why PVS-Studio didn't perform at its best on that code base.

The more I dug, the more irritated I got. There was one particular example that drove me really crazy, and I'm going to tell you about it in a moment. What I have to say as a conclusion is this: I have no complaints against Bill Torpey. He wrote a good, honest article. Thank you, Bill! But I do have complaints against Toyota ITC. I personally think their code base is crap. Yes, it's a blunt statement, but I believe I have enough competence and experience to discuss static code analyzers and ways of evaluating them. In my opinion, itc-benchmarks can't be used to adequately evaluate tools' diagnostic capabilities.

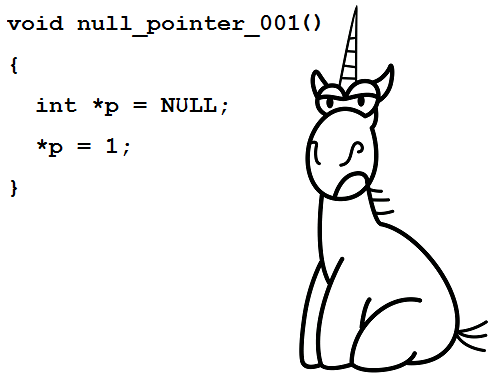

Now, here's the test that killed me.

It's a test for null pointer dereference:

```cpp
void null_pointer_001 ()
{
    int *p = NULL;
    *p = 1; /*Tool should detect this line as error*/
            /*ERROR:NULL pointer dereference*/
}
```

Cppcheck analyzer reports an error in this code:

`Null pointer dereference: p`

PVS-Studio analyzer keeps silent, although it does have diagnostic V522 for cases like that.

So, does it mean that PVS-Studio is worse at diagnosing this example than Cppcheck? No, it's just the opposite: it's better!

PVS-Studio understands that this code was written on purpose and there is no error there.

In certain cases, programmers write code like that intentionally to make the program throw an exception when a null pointer dereference occurs. This trick is used in tests and specific code fragments, and I have seen it more than once. Here's, for example, what it looks like in a real-life project:

```cpp
void GpuChildThread::OnCrash() {
  LOG(INFO) << "GPU: Simulating GPU crash";
  // Good bye, cruel world.
  volatile int* it_s_the_end_of_the_world_as_we_know_it = NULL;
  *it_s_the_end_of_the_world_as_we_know_it = 0xdead;
}
```

That's why we have included a number of exceptions into PVS-Studio's V522 diagnostic rule so that it doesn't go mad about code like that. The analyzer understands that null_pointer_001 is an artificial function; there are just no errors that deal with assigning zero to a pointer and then immediately dereferencing it in real functions. The function name itself is also a sign for the analyzer that the "null pointer" here is not an accident.

For cases like that, the V522 diagnostic has exception A6. It is this exception that synthetic function null_pointer_001 falls under. This is the description of the A6 exception:

The variable is dereferenced in the body of a function whose name contains one of the following words:

Before being dereferenced, the variable is assigned 0 one line earlier.

The synthetic test in question fits this description exactly. Firstly, the function name contains the word "null". Secondly, the variable is assigned zero exactly one line earlier. The exception identified the code as artificial, and that's exactly what it is: a synthetic test.
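To make the two conditions concrete, here is a minimal sketch of how a check of this kind could be expressed. It is not PVS-Studio's actual implementation: the `DerefSite` structure, the `looks_intentional` function, and the line-number model are all hypothetical, and the word list contains only "null" because that is the only word this article confirms.

```cpp
#include <string>
#include <vector>

// Hypothetical, simplified description of one dereference site.
// This is an illustration only, not PVS-Studio's internal data model.
struct DerefSite {
    std::string function_name;  // name of the enclosing function
    int zero_assign_line;       // line where the pointer was assigned 0/NULL, or -1 if never
    int deref_line;             // line where the pointer is dereferenced
};

// Returns true when the site matches both conditions of an A6-style exception.
bool looks_intentional(const DerefSite& site) {
    // Assumption: only "null" is confirmed by the article; a real list would be longer.
    static const std::vector<std::string> marker_words = {"null"};

    bool name_matches = false;
    for (const std::string& word : marker_words) {
        if (site.function_name.find(word) != std::string::npos) {
            name_matches = true;
            break;
        }
    }

    // "Assigned 0 one line earlier" is modeled as adjacent line numbers.
    const bool assigned_just_before =
        site.zero_assign_line >= 0 &&
        site.deref_line - site.zero_assign_line == 1;

    return name_matches && assigned_just_before;
}
```

For null_pointer_001, where the assignment and the dereference sit on adjacent lines, a check like this returns true and the warning would be suppressed.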

It's for these subtle details that I dislike synthetic tests!

It's not the only complaint I have against itc-benchmarks. For example, there is another test in the same file:

```cpp
void null_pointer_006 ()
{
    int *p;
    p = (int *)(intptr_t)rand();
    *p = 1; /*Tool should detect this line as error*/
            /*ERROR:NULL pointer dereference*/
}
```

The rand function can return 0, which will then turn into NULL. PVS-Studio analyzer doesn't know yet what rand can return, so it has no suspicions about this code.

I asked my colleagues to teach the analyzer to better understand how exactly the rand function works. There's no choice; we have to tune the tool by hand so that it does better on the test base in question. We are forced to do it, since people use test suites like that to evaluate analyzers.
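What "teaching the analyzer about rand" might amount to can be sketched roughly as follows. This is only an illustration under simplifying assumptions, not how PVS-Studio models functions: `ValueRange`, `rand_result_range`, and `may_be_null_after_cast` are hypothetical names. The one hard fact used is that the C standard specifies rand as returning a value from 0 to RAND_MAX.

```cpp
#include <cstdlib>  // RAND_MAX

// Hypothetical value-range record an analyzer might attach to an expression.
struct ValueRange {
    long long min;
    long long max;
};

// The C standard specifies that rand() returns a value in [0, RAND_MAX].
constexpr ValueRange rand_result_range() {
    return ValueRange{0, RAND_MAX};
}

// An integer cast to a pointer may produce a null pointer (on typical
// platforms) if the range of possible values includes 0.
constexpr bool may_be_null_after_cast(ValueRange r) {
    return r.min <= 0 && 0 <= r.max;
}
```

With such a model, `may_be_null_after_cast(rand_result_range())` is true, which is all the test case asks a tool to notice.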

But don't you worry. I promise that we will keep working on real-life, useful diagnostics as before instead of adapting the analyzer for tests. We might polish PVS-Studio slightly for itc-benchmarks, but not as a top-priority task and only for those cases that do make at least some sense.

I want developers to understand that the example with rand does not actually show anything. It's synthetic, totally far-fetched. No one writes programs that way; there are no real errors like that.

By the way, if the rand function returns 1400 instead of 0, it won't be any better. A pointer like that can't be dereferenced in any case. So, this null pointer dereference is some strange special case of completely incorrect code, which was simply made up by the suite authors and which you are never going to see in reality.

I know what the real programming problems are. These are, among others, typos, and our tool is regularly catching hundreds of them using, say, diagnostic V501. It's funny, but I haven't found a test in itc-benchmarks that checks if tools can spot the "if (a.x == a.x)" typo pattern. Not a single test!

It turns out that itc-benchmarks ignores the analyzers' typo-search capabilities, while our readers surely know how widespread defects of this type are. And what that project does have is test cases that I find stupid and that are never found in real programs. I can't imagine stumbling upon code like the one below, resulting in an array overrun, in a real, serious project:

```cpp
void overrun_st_014 ()
{
    int buf[5];
    int index;
    index = rand();
    buf[index] = 1; /*Tool should detect this line as error*/
                    /*ERROR: buffer overrun */
    sink = buf[idx];
}
```

The only type of programs where you could probably find that is students' programming exercises.

At the same time, I do know that you are very likely to come across the following typo in a serious project:

```cpp
return (!strcmp (a->v.val_vms_delta.lbl1,
                 b->v.val_vms_delta.lbl1)
        && !strcmp (a->v.val_vms_delta.lbl1,
                    b->v.val_vms_delta.lbl1));
```

This error was found by PVS-Studio in GCC compiler's code: the same strings are compared twice.
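Here is a self-contained sketch of the same pattern, for readers who want to see how quietly it misbehaves. `Delta` is a hypothetical stand-in for the GCC structure, and the "intended" version assumes the second comparison was meant to look at a second label, `lbl2`; the article itself doesn't show the fix.

```cpp
#include <cstring>

// Hypothetical stand-in for the GCC structure; only the two-label shape matters.
struct Delta {
    const char* lbl1;
    const char* lbl2;
};

// Mirrors the reported pattern: the second comparison repeats the first,
// so lbl2 is never checked.
bool same_delta_typo(const Delta& a, const Delta& b) {
    return !std::strcmp(a.lbl1, b.lbl1)
        && !std::strcmp(a.lbl1, b.lbl1);
}

// Assumed intent: compare lbl1 with lbl1 and lbl2 with lbl2.
bool same_delta_intended(const Delta& a, const Delta& b) {
    return !std::strcmp(a.lbl1, b.lbl1)
        && !std::strcmp(a.lbl2, b.lbl2);
}
```

With two values that share lbl1 but differ in lbl2, the typo version reports them as equal, while the assumed-intent version does not. The code compiles and mostly works, which is why typos like this survive for years.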

So, the suite includes tests for diagnosing exotic code with rand but zero tests for classic typos.

I could go on and on, but I'd rather stop. I've let off steam and feel better now. Thank you for reading. Now I have an article to support my opinion about synthetic error bases.

You are welcome to install and try PVS-Studio, a most powerful code analyzer.
