Today we are going to depart from the classical cliché of second-rate detectives and will tell you about the case from our experience, when the butler himself became a victim and a search for criminals led us to an unexpected result. But don't be scared. Of course, not the real people, but the programs will be described. Why are we not scared to admit our own mistakes? We certainly know that not a single team is insured from making them.

Let me start with a little bit of theory. We develop a PVS-Studio static analyzer for C, C++, and C# code. For internal testing of our product, we use a variety of tools and techniques: Unit tests and Visual Studio UI-tests, a joint review of code, specialized tester application. I would like to comment in more detail on the last point.

When developing new diagnostic rules, as well as when you change the internal mechanisms of the analyzer, it is always necessary to understand the way these changes affected the quality of analysis. For this task, we perform test runs of the analyzer on a set of large open source projects. For C/C++ code we use about 150 projects, and 52 projects are used for C#. A tester app for analyzing C/C++ projects under Windows is called SelfTester. Further on, we will talk exactly about this tool. There is also a specialized system for Linux, but for now it will remain behind the scenes.

All work is performed on a build server with Windows 10. As a build system we use Jenkins which is configured to run nightly on a regular basis, among other things, the testers. The run of Jenkins itself is performed from cmd-file with a command:

java -jar jenkins.war >

%JENKINS_PROJECTS%\Logs\%YYYY%.%MM%.%DD%_%HH%.%MI%.log 2>&1The JENKINS_PROJECTS variable specifies the location of the Jenkins project folder on the local disk. Variables YYYY, MM, DD, HH and MI contain the date and time at the moment of executing, respectively. Thus, after launching Jenkins, the output of its console will be redirected to the file with the name of the form:

2017.11.08_17.58.logCmd-file starts with the help of a Windows Task Scheduler. A launch of Jenkins as a Windows service turned out to be inapplicable for us, since in this case there were problems in the work of UI tests of Visual Studio: the service does not have a desktop. Therefore, the invocation chain looks like this:

Windows Task Manager -> cmd.exe -> java.exeSo, having read the short introduction, let's move on to the question of what went wrong.

About six months ago, we began to notice that Jenkins is closed during the night builds from time to time. As a result, it had to re-run be manually, we had to check the logs, and restart some tasks. The problem was fairly regular. The investigation was started with exploration of Jenkins behaviour, because it is Jenkins, which does all the "dirty work", and therefore is the first to fall under suspicion. Yes, that very butler.

First and foremost we checked the configuration and customization of Jenkins tasks. The check did not find anything serious. Moreover, if an error was made, for example, in the order, priority or start time of the tasks, then the problem would probably reveal itself in a different way. It is also unlikely that Jenkins will crash due to configuration errors.

As a temporary measure, a task of a Windows scheduler was reconfigured to restart Jenkins every 30 minutes. For protection against starting a second instance, a check for the existence of an already running Jenkins process was also performed.

The next step was to examine the console logs of Jenkins. As you probably remember, they are saved in the folder %JENKINS_PROJECTS%\Logs, and the file names contain a time stamp of creation (in fact - time of Jenkins restart). After a while, we have studied the contents of this folder and, as expected, found a fairly large number of files. It showed that Jenkins continued to restart. At the same time, Jenkins console logs did not contain anything criminal. The problem became somewhat different: a part of the nightly tests stopped being launched. They just were not starting. However, those that did, often terminated with errors.

All this spoke in favor of the fact that Jenkins was, probably, not to blame. Someone from the outside hindered its work, by "killing" the java.exe process.

The next chosen suspect was Windows. Well, in fact, who will take the responsibility for an unplanned processes termination if not the operating system itself? Perhaps, the system reloads after installing updates, or for some reason closes only the java.exe process. Such situation is possible when someone hinders the restart process and if the restart is not happening, but the system has already managed to kill several of the running processes. One way or another, but the analysis of Windows logs gave no results. Yes, sometimes the system really reloaded, but everything was going as usual. In addition, time and place of the "crime" did not match.

Around the same time, when we were conducting our investigation, another incident happened, which was not given much importance immediately. One night, our build server crashed due to BSOD. Automatic restart did not happen, so in the morning we could see a very bleak picture.

To analyze a memory dump we used Windows Debugger (WinDbg). This utility was found by the path "C:\Program Files (x86)\Windows Kits\10\Debuggers\x64\windbg.exe". The dump was located in itsregular place: "C:\Windows\MEMORY. DMP ". After the dump analysis in WinDbg, the reason of BSOD was discovered:

CRITICAL_PROCESS_DIED (ef)

CRITICAL_PROCESS: svchost.exe

....

FAILURE_BUCKET_ID:

0xEF_svchost.exe_BUGCHECK_CRITICAL_PROCESS_TERMINATED_BY_

SelfTester.exe_6771c7c0As you probably know, a forced termination of the instances of svchost.exe process usually results in BSOD. You can check it yourself.

Yes, our impeccable SelfTester tool led to the collapse of Windows. However, as it was the first such incident for all the long history of existence of the tester, and it has been a long time since the last edits were made to its code, we decided, that it was just an accident.

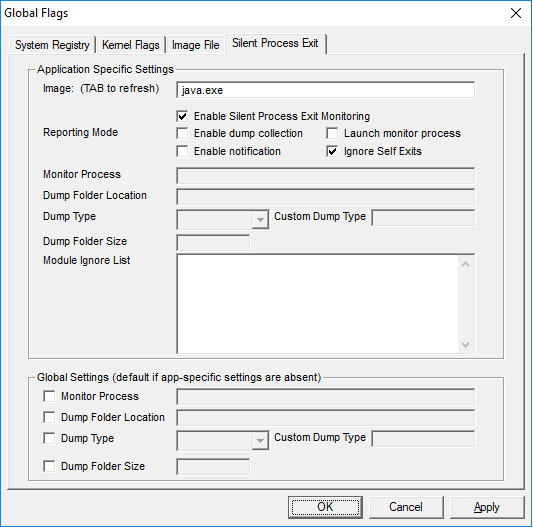

In the meantime, the investigation was going on as usual. To determine the perpetrator of java.exe process termination, a native Windows utility "Global Flags" for configuring the registry keys has been used. Among other things, it allows to set the monitoring mode for the exiting of the specified process, together with adding a record of such events in the system log:

In case of a forced termination of a process by a third-party process, the Windows Application log should contain an event with an Event ID = 3001 from the 'Process Exit Monitor' source.

As it often happens in such cases, you just set a trap but the victim takes cover. The next couple of weeks the server worked perfectly, the tasks were successfully performed and a Windows log did not contain the expected events.

Finally, one fine morning the problem repeated itself and the following entry appeared in the system log :

The process 'C:\Program Files\java\jre1.8.0_102\bin\java.exe' was terminated by the process 'D:\SelfTester\SelfTester.exe' with termination code 0. The creation time for the exiting process was 0x01d3541edc4f867b.

The SelfTester Again. It appears that it was the SelfTester to blame that the process java.exe was terminated, which, in turn, itself launched the Selftester process. An incredible cunning.

To find a perpetrator is just a half of the story. Now you should understand exactly what is happening and how to fix it. To do this, let's examine how the tester works.

To analyze each C++ project, the tester runs the process of the analyzer (PVS-Studio_cmd.exe) with parameters. Upon completion of the analysis, the tester does not wait for the termination of the analyzer process, but responds to an event that a report of the analysis (the plog file) is created. After that, the tester terminates the analyzer process and all of its child processes, "just in case". Probably, at this point, something unexpected occurs.

Actually, this operation algorithm is the legacy of the past and now it is no longer necessary. Previously, the devenv.exe process with parameters was started for the analysis. The analysis was performed by the PVS-Studio plugin for Visual Studio. During this, many child processes were generated which had to be closed.

To understand what exactly was going on, we had to add logging to the process termination logic. After examining the logs, we managed to find out the following: the child processes for PVS-Studio_cmd.exe in the tester are discovered using the following WMI query:

SELECT * FROM Win32_Process WHERE ParentProcessId={0}The problem is that at the time of this query execution, the process might not be running anymore. The analyzer process honestly performed its tasks and exited but the tester does not know about it. Sometimes it happens, that the ID of the analyzer process is identical (was re-used) to the ID of a process that originally launched the cmd.exe, which in turn, launched the java.exe process (that is, the Task Scheduler process). Windows remembers the ID of a parent processes, even if it has already exited. This way, we are getting at this cmd.exe process and terminating it, and all of its child processes (recursively).

The issue was solved by adding two conditions in the tester code under which it could not terminate child processes:

Therefore, the investigation is over. All suspicions are dismissed from the innocents and the identified perpetrators and severely punished (fixed).

In conclusion, I would like to note that this situation is, in my opinion, a fine example of how even a small mistake can lead to an error that is difficult to reproduce.

We are not ashamed to admit our mistakes and, as I think, it was interesting for readers to get acquainted with one of them.

We wish you bugless code and successful builds!

0