We have been asked many times how much the diagnostics of our PVS-Studio analyzer overlap with those of the Cppcheck analyzer. I've decided to write a small article about it that I can refer to as a quick answer in the future. To put it briefly, the overlap is very small: only 6% of the total number of errors are detected by both analyzers. In this article, I will tell you how we got this figure.

At first I planned to draw a Venn diagram with neat circles, but that turned out to be a tough task. You see, Excel draws circles without taking their areas into account, while the programs that can draw correctly proportioned diagrams are paid. So I had to make do with squares, which required only a calculator, pen, paper and the Paint editor.

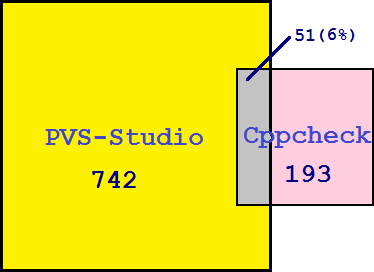

Figure 1. Graphic representation of the number of errors found by the PVS-Studio and Cppcheck analyzers.

The areas of the squares are proportional to the number of errors found by each analyzer. The gray rectangle represents the number of errors found by both analyzers.
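The arithmetic behind such a diagram is simple enough to sketch. Since area is proportional to the error count, the side of each square is the square root of that count, and the overlap share is the number of common errors divided by the number of distinct errors found by either tool. The function names and the counts below are hypothetical and chosen only for illustration; they are not the figures from the comparison.

```python
import math


def square_side(error_count, area_per_error=1.0):
    """Side length of a square whose area is proportional to error_count."""
    return math.sqrt(error_count * area_per_error)


def overlap_share(found_a, found_b, found_both):
    """Fraction of all distinct errors that both analyzers detected."""
    # Errors found by both are counted once in the total.
    total_distinct = found_a + found_b - found_both
    return found_both / total_distinct


# Hypothetical counts, for illustration only (not the article's data).
found_a, found_b, found_both = 400, 100, 25

side_a = square_side(found_a)
side_b = square_side(found_b)
share = overlap_share(found_a, found_b, found_both)

print(f"square sides: {side_a:.1f} and {side_b:.1f}")
print(f"overlap share: {share:.1%}")
```

The overlap region only needs to have the right area, so it can be drawn as any rectangle with sides whose product equals the number of common errors.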

So we've got the following results:

Now, where did we get this data? In March 2014, we carried out a large comparison of code analyzers: PVS-Studio, Cppcheck, and Visual Studio:

Some of our readers heavily criticized those results. But we are sure that most of that criticism came from people who read only the brief report without carefully studying the article that describes the comparison procedure itself.

Since the PVS-Studio analyzer had proved much better than Cppcheck, some readers decided we had been cheating. But we hadn't. PVS-Studio is truly a more powerful tool than Cppcheck, and I don't see anything strange or unexpected about that. Commercial tools are generally better than free ones. By the way, the high quality of our comparison was confirmed by the author of Cppcheck himself. We will publish an article with his letter someday and also answer a number of questions readers asked after the comparison report was published.

Let's get back to the overlap between the diagnostics. As you can see from the diagram, it is quite small, but that's no wonder. First, no one copies diagnostics for their analyzer from another tool. The overlap occurs among frequently occurring errors with evident patterns. That is, diagnostics for these errors are implemented independently by different authors.

Second, the era of static analyzers is only beginning. There is an incredible number of error patterns they can diagnose, hence so little overlap. One analyzer focuses on errors of one type, another focuses on different errors, and so on. The overlap will of course grow gradually over time, but error patterns are so numerous that it is going to be a slow process. Moreover, the analyzers' scope of use will only get broader with the release of the C++11 and C++14 standards.
